The $600+ Billion Demand Wall: Why Nvidia Remains the Most Mispriced Mega-Cap in Tech [Nvidia Q4 FY26 Earnings Preview]

Welcome, AI & Semiconductor Investors,

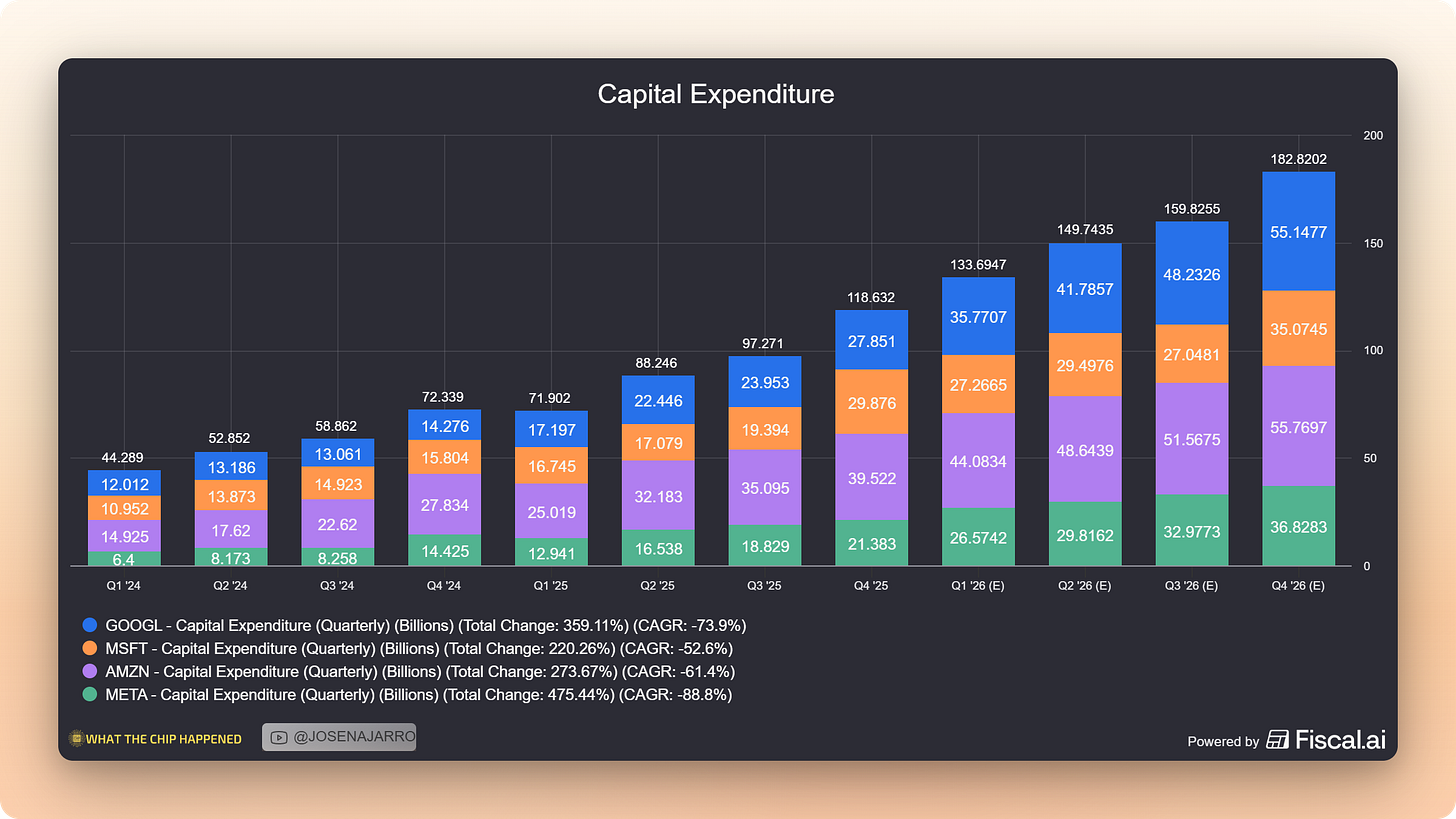

This is a comprehensive analysis of Nvidia’s Q4 FY2026 earnings expectations and of why the hyperscaler CapEx supercycle makes the bear case untenable. You can find more research like this at our AI Community - WhatTheChipHappened.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

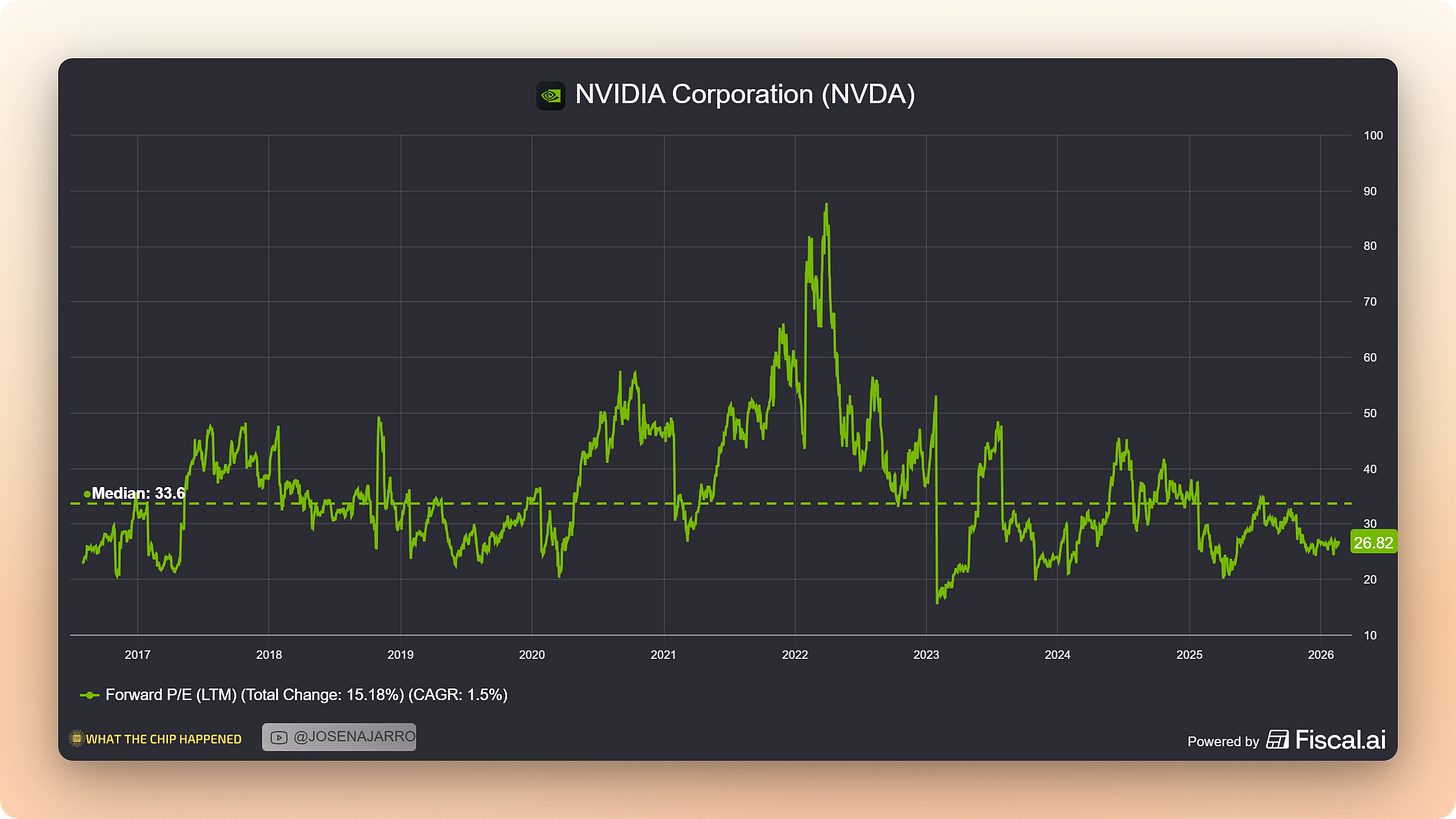

With Nvidia trading at ~26x forward earnings and AI bubble fears reaching fever pitch, bears argue the stock is priced for perfection ahead of Q4 FY2026 earnings. Dot-com comparisons are proliferating, custom silicon competition is accelerating, and skeptics question whether hyperscaler ROI can justify the spending.

Our View: EXTREMELY BULLISH

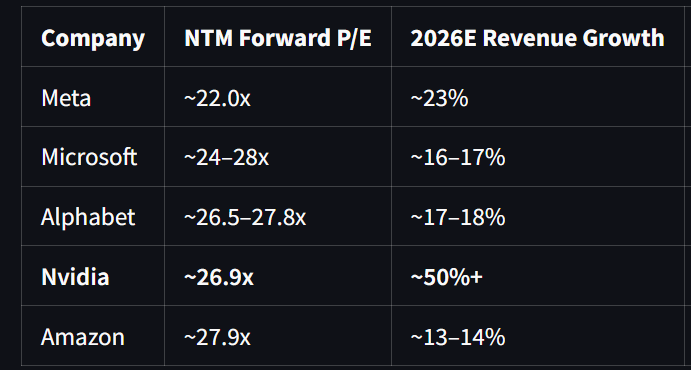

The bear case fundamentally misreads the supply-demand picture. Four hyperscalers simultaneously confirmed they are supply-constrained for AI compute. Over $660 billion in CapEx is committed for 2026, up dramatically from 2025 levels. TSMC’s CEO personally validated demand with every major cloud customer before committing to a $52–56 billion CapEx cycle to expand semiconductor manufacturing. And roughly $150 billion in AI lab funding has been raised or is being finalized in just the first seven weeks of 2026, with much of that capital expected to go to Nvidia GPUs. At ~26x forward P/E with 50%+ revenue growth, Nvidia may be the cheapest it has been relative to its growth in this entire AI cycle.

Key Evidence:

• Every major hyperscaler stated demand exceeds supply — they will buy every GPU Nvidia ships

• TSMC wafer and advanced packaging supply is the bottleneck through 2028, preserving Nvidia’s pricing power through that period

• At ~26x NTM P/E with 50%+ growth, Nvidia is one of the cheapest profitable growth stocks in the market

• China sales represent pure upside optionality — AMD already reported Q4 China chip sales, confirming the regulatory pathway

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Note: The hyperscaler CapEx figures above do not include CapEx from neoclouds, AI labs, or enterprise players, public or private.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

THE BEAR CASE

Before making the bullish argument, intellectual honesty demands we examine what critics are saying, and why their concerns, while understandable, are ultimately not supported by the evidence.

The AI bubble narrative has intensified in recent months. The Shiller cyclically adjusted price-to-earnings (CAPE) ratio for the U.S. market exceeded 40 for the first time since the dot-com crash. Michael Burry, the investor who famously shorted subprime mortgages, warned that the AI buildout is following “patterns” similar to those of the late 1990s. Speculation has also emerged that leading AI firms are engaged in circular investment flows that artificially inflate stock values: Nvidia invests in OpenAI, OpenAI buys Nvidia chips, Nvidia revenue grows, repeat.

The custom silicon competition narrative has also gained traction. All four hyperscalers are developing proprietary chips: Google’s TPUs, AWS Trainium, Microsoft Maia, and Meta’s MTIA. Amazon disclosed that Trainium is now a $10 billion-plus annualized run-rate business, growing triple digits. The concern: Nvidia’s moat is eroding as customers vertically integrate.

ROI skepticism persists despite the spending. Reports indicate that 95% of organizations are seeing zero return on GenAI investments. If hyperscaler CapEx fails to generate adequate returns, the spending could prove unsustainable.

Finally, valuation sensitivity creates asymmetric risk, or so the bears argue. The stock is priced for perfection. Any guidance miss could trigger a significant selloff.

Why these concerns are gaining traction:

• Historical pattern recognition—dot-com parallels are intellectually seductive

• Custom silicon announcements create credible-sounding competitive threats

• The magnitude of spending ($600B+) seems unsustainable on its face

• Nvidia’s stock has already appreciated significantly: “how much higher can it go?”

We take these concerns seriously. Each deserves evidence-based responses. But when we examine what hyperscaler executives, TSMC’s CEO, and the broader supply chain are actually saying, in their own words, a very different picture emerges.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

THE HYPERSCALER SUPPLY CONSTRAINT

The single most important data point for Nvidia investors is this: all four hyperscalers (Meta, Alphabet, Microsoft, and Amazon) independently confirmed on their calendar Q4 2025 earnings calls that AI compute demand currently exceeds their ability to supply it.

A supply-constrained customer will take every unit Nvidia can ship. This is not theoretical demand or speculative ordering. These are four of the largest, most sophisticated technology infrastructure buyers in the world, representing approximately 75% of global cloud compute, all saying the same thing simultaneously.

Meta CFO Susan Li was explicit during the company’s January 28 earnings call:

“We do continue to be capacity constrained. Our teams have done a great job ramping up our infrastructure through the course of 2025. But demands for compute resources across the company have increased even faster than our supply. So we expect over the course of 2026 to have significantly more capacity this year as we add cloud. But we’ll likely still be constrained through much of 2026 until additional capacity from our own facilities comes online later in the year.”

Meta guided to $115–135 billion in 2026 CapEx—up 59–87% year-over-year—and they still expect constraints.
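
As a quick consistency check, the guide and the stated growth range should point to the same 2025 base. The Python sketch below assumes the low end of the guide pairs with the low end of the growth range (and high with high); that pairing is our reading, not something Meta stated.

```python
# Back out the 2025 CapEx base implied by Meta's 2026 guide.
# All figures in billions of USD; growth in percent.

def implied_base(guide: float, growth_pct: float) -> float:
    """Prior-year value that `guide` represents after `growth_pct` growth."""
    return guide / (1 + growth_pct / 100)

# Assumed pairing: low guide with low growth, high guide with high growth.
low_base = implied_base(115, 59)
high_base = implied_base(135, 87)

print(f"Low end implies a 2025 base of ${low_base:.1f}B")
print(f"High end implies a 2025 base of ${high_base:.1f}B")
```

Both ends land near $72 billion, so the quoted dollar range and percentage range are internally consistent.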

Alphabet’s Sundar Pichai echoed this on February 4. When asked directly about supply dynamics, he did not hedge:

“You are right, and we’ve been supply constrained even as we’ve been ramping up our capacity... I do expect to go through the year in a supply-constrained way.”

He described compute capacity as the single biggest thing that keeps him up at night:

“The top question is definitely around compute capacity, all the constraints, be it power, land, supply chain constraints, how do you ramp up to meet this extraordinary demand for this moment.”

Alphabet guided to $175–185 billion in 2026 CapEx—nearly double 2025 levels.

Microsoft provided the most granular GPU spending disclosure of the group. CFO Amy Hood stated on January 28:

“Capital expenditures were $37.5 billion, and this quarter, roughly 2/3 of our CapEx was on short-lived assets, primarily GPUs and CPUs. Our customer demand continues to exceed our supply.”

Two-thirds of $37.5 billion is roughly $25 billion spent on short-lived assets, primarily GPUs and CPUs, in a single quarter. Microsoft then revealed what Azure growth would have been with unconstrained supply:

“If I had taken the GPUs that just came online in Q1 and Q2 and allocated them all to Azure, the KPI would have been over 40.”

Azure grew 39%. GPU supply is literally constraining Microsoft’s revenue growth.
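
Hood’s “roughly 2/3” share of $37.5 billion can be turned into a dollar figure. This Python sketch treats 2/3 as exact, and the annualized line is a hypothetical run rate that assumes every quarter matches this one; it is not a Microsoft figure.

```python
# Microsoft's reported quarterly CapEx, billions of USD.
quarterly_capex = 37.5
# "Roughly 2/3" went to short-lived assets (primarily GPUs and CPUs);
# treated as exactly two-thirds for this sketch.
short_lived_share = 2 / 3

short_lived_spend = quarterly_capex * short_lived_share

# Hypothetical: a naive run rate if all four quarters looked like this one.
annualized = short_lived_spend * 4

print(f"Short-lived assets this quarter: ${short_lived_spend:.1f}B")
print(f"Naive annualized run rate: ${annualized:.0f}B")
```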

Amazon’s Andy Jassy committed to approximately $200 billion in 2026 CapEx during the February 5 call:

“We expect to invest about $200 billion in capital expenditures across Amazon, but predominantly in AWS because we have very high demand, customers really want AWS for core and AI workloads, and we’re monetizing capacity as fast as we can install it.”

The phrase “monetizing capacity as fast as we can install it” is the most bullish demand signal a supplier can hear.

The takeaway: When demand exceeds supply across every major customer simultaneously, pricing power accrues to the supplier. The question is not “will demand hold?” but “how fast can TSMC manufacture these AI chips?”
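
Summing the 2026 guides quoted in this section shows how much of the headline figure these three companies account for. A minimal Python sketch; Amazon’s “about $200 billion” is treated as a point estimate, and Microsoft is left out because the remarks above give a quarterly figure rather than a calendar-2026 guide.

```python
# 2026 CapEx guidance quoted above, as (low, high) in billions of USD.
guides = {
    "Meta": (115, 135),
    "Alphabet": (175, 185),
    "Amazon": (200, 200),  # "about $200 billion", used as a point estimate
}

low_total = sum(low for low, _ in guides.values())
high_total = sum(high for _, high in guides.values())

print(f"Meta + Alphabet + Amazon: ${low_total}B to ${high_total}B")
```

The rest of the $660 billion-plus figure cited at the top of this piece would come from Microsoft, whose calendar-2026 total is not quoted here.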

AI & CHIP STOCK RESEARCH COMMUNITY — CLICK HERE FOR 33% OFF

Get 15% OFF FISCAL.AI — ALL CHARTS ARE FROM FISCAL.AI —

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

THE SUPPLY CHAIN SAYS DEMAND IS REAL

The most compelling independent validation of Nvidia’s demand thesis comes not from Nvidia itself, but from TSMC, the sole manufacturer of every advanced Nvidia GPU. CEO C.C. Wei’s January 15 earnings call contained what may be the single most important passage for understanding the durability of AI infrastructure demand.

Wei addressed the bubble question head-on, acknowledging his own nervousness before revealing what convinced him:

“You essentially try to ask us, say, whether the AI demand is real or not. I’m also very nervous about it. You bet because we have to invest about $52 billion to $56 billion for the CapEx, right? If we didn’t do it carefully, that would be big disaster to TSMC for sure. So of course, I spend a lot of time in the last 3, 4 months talking to my customer and end customers’ customer. I want to make sure that my customers demand are real. So I talked to those cloud service providers, all of them. The answer is that I’m quite satisfied with the answer. Actually, they show me the evidence that the AI really help their business.”

This is not a chip company repeating what customers tell it. This is TSMC’s CEO personally validating demand by speaking directly with the hyperscalers and with their own customers, the enterprises and AI labs actually deploying the compute. He found the evidence convincing enough to commit over $50 billion in capital.

On the longevity of the cycle, Wei was remarkably candid:

“All in all, I believe in my point of view, the AI is real, not only real, it’s starting to grow into our daily life... you—another question is can the semiconductor industry to be good for 3, 4, 5 years in a row, I’d tell you the truth, I don’t know. But I look at the AI, it looks like it’s going to be like an endless, I mean, that for many years to come.”

Critically, Wei identified where the bottleneck lies, and it is not demand:

“Today, from my point of view, still the bottleneck is TSMC’s wafer supply. Not the power consumption, not yet... TSMC’s wafer can support how much of the gigawatt, still not enough.”

He then provided the timeline for relief:

“If you build a new fab, it takes 2 to 3 years to build a new fab. So even we start to spend the $52 billion to $56 billion, the contribution to this year almost none and to 2027, a little bit. So we actually are looking for 2028, 2029 supply.”

The memory supply chain tells the same story. Micron CEO Sanjay Mehrotra stated on December 17:

“We are more than sold out. I mean, we have a significant amount of unmet demand in our models... the demand on us is so much higher than the supply, that even small increases in supply are not going to be able to make a dent in that demand.”

Micron’s entire 2026 HBM supply is already contracted, with volume and pricing locked.

Applied Materials CEO Gary Dickerson added the capstone on February 12:

“With the accelerated growth of AI end markets, we believe that global semiconductor industry revenues can potentially reach $1 trillion in 2026, several years earlier than prior predictions.”

The takeaway: When TSMC commits $52–56 billion in CapEx after personally validating demand with every hyperscaler, when Micron is “more than sold out” on HBM with “significant unmet demand,” and when Applied Materials sees the $1 trillion semiconductor milestone arriving several years early, this is not speculative froth. This is industrial-scale conviction backed by capital commitments. With new TSMC fabs not expected to contribute meaningful supply until 2028–2029, the constraint is likely to persist through 2028, which would let Nvidia retain pricing power for at least the next two years.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

THE NEOCLOUD AND AI LAB DEMAND LAYER

Beyond the hyperscalers, a massive secondary demand wave is emerging from neocloud providers and AI labs. The AI labs alone have raised, or are finalizing, more than $150 billion in fresh capital in early 2026, much of it expected to flow into Nvidia GPU purchases.

Nebius CEO Arkady Volozh described the demand picture on his recent earnings call:

“Demand remains robust, and our pipeline continues to grow substantially. We sold out of capacity in Q3 and Q4 last year, and we’re already now in Q1 of 2026 also sold out. Even before we bring capacity online, it’s often sold out.”

He added:

“Everything we build, we sell. We are in the very early days of one of the biggest industrial and technological revolutions in history.”

A critical data point counters the concern that older GPU generations will become stranded assets. IREN COO Kent Draper offered industry-wide evidence:

“If you look more broadly across the industry, if you think of A100, H100s, those are more than 5 years old and more than 3 years old, respectively now. Those chips are still effectively 100% utilized across the industry and still earning very good rates of return against their original capital costs.”

AWS CEO Matt Garman confirmed the same at the Cisco AI Summit 2026:

“We actually are completely sold out of and have never retired an A100 server.”

Contract durations are lengthening, signaling customer confidence in multi-year demand. Nebius CRO Marc Boroditsky noted:

“In Q4, we saw nearly twice as many transactions completed over 12 months in duration over what we succeeded with in Q3, while average selling prices increased by more than 50%.”

When customers sign longer contracts at higher prices, they are voting with capital on demand durability.

The AI lab funding picture is staggering. OpenAI is nearing a deal to raise more than $100 billion. Anthropic closed a $30 billion Series G on February 12 at a $380 billion valuation, the second-largest venture round ever. Elon Musk’s xAI started the year with a $20 billion Series E. Once OpenAI’s round closes, combined early 2026 funding from just these three labs will exceed $150 billion.
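
The $150 billion figure is a simple sum of the three rounds above. In this Python sketch OpenAI’s entry uses the $100 billion floor of a deal that, per the reporting, had not yet closed.

```python
# Early-2026 AI lab rounds cited above, in billions of USD.
rounds = {
    "OpenAI": 100,    # "more than $100 billion"; reported as nearing a deal
    "Anthropic": 30,  # Series G, closed February 12
    "xAI": 20,        # Series E
}

total = sum(rounds.values())
print(f"Combined: at least ${total}B")
```

Because the OpenAI figure is both a floor and still pending, the total is best read as a lower bound that depends on that round closing.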

Where does this capital go? The reporting is explicit: “Much of OpenAI’s new capital is likely to be used to purchase Nvidia chips to power OpenAI’s AI models.” These labs largely rent rather than build their own data centers, leasing capacity from AWS, Azure, and GCP.

The takeaway: The “circular AI financing” concern is overstated. The hyperscalers are funding their CapEx from operating cash flows and balance sheets, profitable companies deploying existing capital, not leveraged speculation.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

THE VALUATION DISCONNECT

At approximately 26x forward earnings with 50%+ revenue growth expected in FY2027, Nvidia trades in line with the Magnificent 7 average despite dramatically superior growth. The market is pricing in excessive risk that the data does not support.

The earnings trajectory tells the story clearly. Wall Street expects full-year fiscal 2026 earnings, including the upcoming Q4 report, of approximately $4.69 per share. If Nvidia hits that number, the stock at $180 trades at roughly 38x trailing earnings, which sounds elevated. But analysts expect fiscal 2027 earnings to reach $7.66 per share, placing the stock at roughly 23.5x forward earnings on next year’s numbers.
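
The multiples follow from dividing the share price by EPS. A minimal Python sketch, treating $4.69 as full-year fiscal 2026 EPS (a trailing P/E needs a full year of earnings, not one quarter). The last line adds a PEG-style ratio, forward P/E divided by expected EPS growth in percentage points; that heuristic is our addition, not a figure from the analysts cited.

```python
price = 180.0     # share price used in the text, USD
eps_fy26 = 4.69   # consensus fiscal 2026 EPS, treated as full-year
eps_fy27 = 7.66   # consensus fiscal 2027 EPS

trailing_pe = price / eps_fy26
forward_pe = price / eps_fy27
eps_growth = eps_fy27 / eps_fy26 - 1  # expected FY27 EPS growth as a fraction

# PEG-style ratio: forward P/E per percentage point of EPS growth.
peg = forward_pe / (eps_growth * 100)

print(f"Trailing P/E: {trailing_pe:.1f}x")
print(f"Forward P/E:  {forward_pe:.1f}x")
print(f"EPS growth:   {eps_growth:.0%}")
print(f"PEG-style:    {peg:.2f}")
```

On these inputs the forward multiple comes out near 23.5x, a little below the ~26x NTM figure used elsewhere; the gap depends on which twelve-month window and estimate set is used.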

Why is the market mispricing this? Several factors contribute. AI bubble fears create an excessive risk premium that depresses multiples. China uncertainty keeps estimates conservative, since any China revenue is excluded from guidance. The custom silicon narrative creates a perceived competitive threat that the data does not support. And Nvidia has historically beaten and raised guidance, meaning consensus estimates likely understate actual results.

The critical timing observation: the hyperscaler CapEx commitments that support the bull case were made after Nvidia’s Q3 earnings. Meta, Alphabet, and Amazon all reported in late January and early February, after Nvidia guided. The demand environment is strengthening, not weakening. If the market fully incorporated the incremental demand signals, the stock would trade higher.

The takeaway: If Nvidia delivers Q4 in line and guides Q1 FY27 revenue above $74 billion (versus ~$71.6 billion consensus), the stock could re-rate 10–15% higher on multiple expansion alone as AI bubble fears dissipate. At current prices, the risk-reward is asymmetric in favor of longs.
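
The $74 billion bull threshold here and the $70 billion miss threshold in the risks section can both be expressed relative to consensus. This Python sketch also shows what a 10–15% re-rating means for the multiple if forward EPS stays fixed, which is an assumption: in practice a guidance beat would likely move estimates too.

```python
consensus_q1 = 71.6   # Q1 FY27 revenue consensus, billions of USD
base_multiple = 26.0  # ~26x forward earnings

def pct_vs_consensus(guide: float) -> float:
    """Percent difference between a revenue guide and consensus."""
    return (guide / consensus_q1 - 1) * 100

# Multiple after a pure re-rating, holding forward EPS constant.
rerated = [base_multiple * (1 + r) for r in (0.10, 0.15)]

print(f"$74B guide vs consensus: {pct_vs_consensus(74.0):+.1f}%")
print(f"$70B guide vs consensus: {pct_vs_consensus(70.0):+.1f}%")
print(f"Re-rated multiple: {rerated[0]:.1f}x to {rerated[1]:.1f}x")
```

On these inputs, the bull threshold sits about 3.4% above consensus and the miss threshold about 2.2% below it.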

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

THE CHINA OPTIONALITY

AMD’s Q4 earnings demonstrated that chip sales to China are flowing again with MI308, including licenses for MI325 accelerators. This provides a critical proof point: if AMD is selling chips to China, Nvidia’s H20/H200 approvals should translate into material revenue, revenue currently excluded from Nvidia’s guidance.

Nvidia CEO Jensen Huang’s first visit to China in 2026 represented a significant shift. Beijing has reportedly approved the sale of 400,000 H200 chips to China’s top AI firms. Reuters indicated that Nvidia has already received orders for more than 2 million H200 chips for 2026.

At an estimated ASP of $25,000–30,000 per H200, the 400,000 approved chips represent $10–12 billion in potential revenue. If orders for 2 million chips (per Reuters) were fulfilled, the total addressable opportunity would be $50–60 billion, against zero in current guidance.
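
The revenue ranges are units multiplied by the estimated ASP. A minimal Python sketch; it assumes every approved or ordered chip ships at the estimated $25,000–30,000 ASP, and it yields gross revenue, not profit.

```python
# Estimated H200 average selling price (ASP) range from the text, USD per chip.
ASP_LOW, ASP_HIGH = 25_000, 30_000

def revenue_range(units: int) -> tuple[float, float]:
    """Gross revenue in billions of USD if `units` chips ship across the ASP range."""
    return units * ASP_LOW / 1e9, units * ASP_HIGH / 1e9

approved = revenue_range(400_000)   # chips Beijing reportedly approved
ordered = revenue_range(2_000_000)  # chips reportedly on order, per Reuters

print(f"Approved: ${approved[0]:.0f}B to ${approved[1]:.0f}B")
print(f"Ordered:  ${ordered[0]:.0f}B to ${ordered[1]:.0f}B")
```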

The AMD proof point de-risks the thesis. If one U.S. AI chip company is successfully navigating China sales, there is no regulatory reason Nvidia cannot do the same.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

HISTORICAL CONTEXT

The dot-com comparison deserves a direct response. Yes, the Shiller CAPE ratio exceeded 40 for the first time since the dot-com crash. Yes, AI spending growth rates echo 1990s infrastructure buildouts. But the structural differences matter more than the surface similarities.

First, real earnings versus projections. During the dot-com bubble, companies were valued on eyeballs and revenue projections that never materialized. Today’s AI leaders are already profitable. Nvidia’s earnings grew 67% year-over-year in Q3 while revenue grew 62%. Meta, Alphabet, Microsoft, and Amazon are deploying operating cash flow into AI infrastructure. Today’s AI giants have lower debt-to-earnings ratios than WorldCom or the other poster children of 1999.

Second, CapEx as percentage of GDP remains below historical peaks. AI capex has recently equated to approximately 0.8% of GDP. During other technology booms, peak levels reached 1.5% or greater.

Third, physical assets are being built. Unlike the 1990s, today’s investment is heavily capex-driven: data centers, GPUs, networking equipment, power systems. AWS disclosed nearly 4 gigawatts of power capacity added in 2025 alone, real infrastructure with 20–30 year useful lives.

Fourth, post-Sarbanes-Oxley reporting standards make the kind of accounting fraud that inflated dot-com demand far harder to pull off.

The lesson from history: Bubbles are characterized by leverage, fraud, and disconnection from fundamentals. The current AI infrastructure buildout is characterized by operating cash flow, audited financials, and supply-constrained demand. The comparison does not hold.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

SCENARIO ANALYSIS

We weight the bull and base scenarios at 95% combined probability. The hyperscaler CapEx announcements since Q3 suggest guidance will surprise to the upside, not the downside. The 5% bear scenario probability reflects execution risk and potential macro disruption, not demand concerns.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

KEY MANAGEMENT COMMENTARY

Microsoft (MSFT) — Cisco AI Summit 2026

“I just don’t see how the demand for inference is gonna go down. And it’s hard to imagine, given what the silicon situation is, the hardware situation, how difficult it is to build data centers and deploy power and all the things that you wanna do, how you get ahead of that anytime soon.” — Kevin Scott, CTO

This quote addresses the “efficiency will reduce demand” concern directly — Microsoft’s CTO sees no scenario where inference demand declines.

Alphabet (GOOGL) — Cisco AI Summit 2026

“Deep partnership with NVIDIA — a lot of our success at Google has been as a result of that partnership with NVIDIA and GPUs... Every efficiency we deliver — and it’s the rate of improvement on the efficiency side, I’ve never seen anything like it — but it gets consumed instantaneously.” — Amin Vahdat, Chief Technologist

This is the Jevons Paradox in real time: efficiency gains get immediately absorbed by expanding workloads, not by reduced purchasing.

Amazon (AMZN) — Q4 FY2025 Earnings Call

“Data centers are a 20-30-year amortization timeline. The power, you have to make commits for long periods of time. I’m gonna have this asset for 20 years or 30 years.” — Matt Garman, CEO AWS

The multi-decade commitment horizon signals this is not cyclical spending that can be quickly reversed.

TSMC (TSM) — Q4 2025 Earnings Call

“The AI is real, not only real, it’s starting to grow into our daily life... it looks like it’s going to be like an endless, I mean, that for many years to come.” — C.C. Wei, CEO

Independent foundry validation after direct conversations with every hyperscaler and their customers.

Super Micro (SMCI) — Q2 FY2026 Earnings Call

“AI GPU platforms, which represent over 90% of Q2 revenue, continue to be the key growth driver... we are also preparing for upcoming NVIDIA Vera Rubin.” — Charles Liang, CEO

Vera Rubin systems already have committed customer orders for H2 2026 delivery.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

RISKS TO OUR VIEW

Our thesis is not without risks. Here’s what could prove us wrong:

1. Guidance Miss Risk: The stock is priced for strong guidance. Any guide below $70 billion for Q1 FY27 would likely trigger a 10-15% selloff regardless of Q4 results. The market has elevated expectations, and disappointment would be punished. Our mitigant: hyperscaler CapEx announcements since Q3 suggest upside, not downside, to guidance.

2. Gross Margin Compression: Blackwell ramp costs and rack-scale system complexity could pressure margins below the 75% guide. The transition from Hopper HGX to Blackwell full-scale data center solutions involves new component costs and manufacturing learning curves. Our mitigant: management has flagged this as temporary, with margins expected to improve as Blackwell scales.

3. Custom Silicon Acceleration: If hyperscalers accelerate Trainium, TPU, and Maia adoption faster than expected, Nvidia’s share of incremental AI compute spend could decline. Amazon’s Trainium is already a $10B+ business. Our mitigant: every hyperscaler explicitly reaffirmed Nvidia partnership alongside custom silicon. Alphabet confirmed being “among the first to offer” Nvidia’s Vera Rubin platform. These are complements, not substitutes.

4. China Regulatory Reversal: Geopolitical tensions could re-freeze H200 exports despite recent approvals. The regulatory environment remains fluid. Our mitigant: China revenue is excluded from guidance entirely, so any sales are pure upside, not base case.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

BOTTOM LINE

The bear case rests on abstract fears: bubble comparisons, custom silicon threats, ROI skepticism. The bull case rests on concrete evidence: $660–690 billion in committed hyperscaler CapEx, all four hyperscalers simultaneously declaring supply constraints, TSMC’s CEO personally validating demand after speaking with every major customer, Micron’s HBM sold out with “significant unmet demand,” and $150 billion-plus in AI lab funding raised or being finalized in early 2026, much of it expected to flow to Nvidia GPUs.

The supply chain is unanimous. The hyperscalers are unanimous. The neoclouds are unanimous. The only dissent comes from observers who are not writing purchase orders.

Nvidia is the most mispriced mega-cap in technology. It trades at ~26x forward earnings with 50%+ growth, implying the market assigns a massive risk premium that the evidence does not support.

For investors evaluating the setup into Q4 earnings, the asymmetry favors being long. A guidance beat above $74 billion for Q1 FY27 could trigger meaningful re-rating. A guidance miss, which would require demand to have softened despite every hyperscaler announcing increased CapEx, is unlikely given the timing of those announcements. China represents a free call option with substantial upside.

The $600 billion-plus in committed 2026 hyperscaler capital expenditure is not a ceiling; it is a floor. The AI infrastructure supercycle has not peaked. It is accelerating. And Nvidia remains the indispensable supplier at the center of it all.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

YouTube Channel - Jose Najarro Stocks

X Account - @_Josenajarro

Disclaimer: This article is intended for educational and informational purposes only and should not be construed as investment advice. Always conduct your own research and consult with a qualified financial advisor before making any investment decisions.